Fake faces, real risks: The growing threat of deepfakes to business security and trust

In the world of trillion-dollar companies and media corporations, the ascent of deepfake technology presents a potent and escalating menace to business and trust, with billions at stake.

Recently, scammers managed to steal over $25 million from a Hong Kong company. When an employee received a message from an unfamiliar email address claiming to be a client, he suspected it might be a phishing scam. But when he joined the Zoom call and saw faces he knew, he dropped his guard and made the transactions. Little did he know, none of the faces he was seeing were real.

“I see deepfakes as a threat,” says Hannes Cools, an AI researcher at the University of Amsterdam. “There is definitely a risk that companies and news organizations do not detect deepfakes because they can surpass protection tools.”

Deepfakes have seen a dramatic increase in recent years.

Trend Micro, a cybersecurity firm, has been following the threat for years. In 2020, it assessed that the “rise of deepfakes raises concern: It inevitably moves from creating fake celebrity pornographic videos to manipulating company employees and procedures.”

Just a few years later, its assessment is that “generative AI have made phishing virtually effortless with error-free and convincing messages as well as persuasive audio and video deepfakes”.

Onfido, a London-based AI start-up, found that fraudulent attacks using deepfakes increased by 3,000% in 2023. Sumsub, a global verification platform, puts deepfakes at 5.9% of all fraud in the UK in 2023, up from 1.2% in 2022.

These statistics highlight the growing threat of deepfake technology in fraud and scams. Fraudsters and malicious agents are capitalising on the ease of use of deepfake technology while leveraging AI to commit identity fraud, manufacture fake IDs, or impersonate others over the phone or in online meetings.

“There really isn’t any good way to defend against it. Overarching standards like the ones coming from the EU and introducing metadata authentication can help,” Cools says. “But at the end of the day, the technology is developing so fast that it is very hard to tell where the technology will be at in a few years.”

A few years ago, video deepfakes first reached the mainstream with the infamous Obama deepfake by Jordan Peele. Creating that video took a team of experienced VFX artists weeks of work.

“Deepfakes can easily trick people by making it look like someone from a company is doing or saying something they aren't,” says AItrepreneur, a YouTuber focused on cutting-edge AI tech and a respected figure in the scene with over 140,000 subscribers.

Even though the technology is so new, it is already available to everyone. AItrepreneur illustrates this by creating software kits that make complex programs like Stable Diffusion easy to install on any PC and use “in a matter of minutes”.

AItrepreneur himself often warns his audience about new exploits and scams. However, his kits do not have any safeguards against potential malicious uses.

Convincing deepfake videos are now easy for anyone with a decent laptop to produce, as shown in this video.

“This is a danger for business, yes, but I am more worried about the effect of this on journalism and trust,” Cools says. “Companies like the BBC are well aware of the risk, and the EU is working to pioneer legislation to make the fight against misinformation easier.”

“The question is only when media companies will reach a point where they can efficiently fight against this,” he adds. “The trust in media companies is already eroding and the media literacy right now is just frankly too low for the amount of risk and misinformation that is around.”

One way to combat deepfakes and make companies more secure is to increase literacy in these fields, says AItrepreneur. “Companies can fight deepfakes by teaching everyone about them, keeping their cyber security strong, checking who's who more carefully, and having a plan ready if deepfakes are indeed used against them.”

“Businesses will need to not only train employees in best practices for detecting inauthentic audio, photo, and video content, but also establish clear protocol for verification and trusted sources of content authentication,” agrees Andrew Lewis, a leading researcher in the field who currently works at Oxford.

A new research paper by Lewis examined how proficient people are at spotting deepfakes. He and his team found that, even when people are told that one video in a collection will be a deepfake, only about 21.6% of them are able to correctly spot it.

“Manual detection abilities in the general public are rather low,” he says. When confronted with subtle deepfakes in a natural environment, the study shows, most people are not able to spot them.

“The greatest danger we face is that these technologies further fracture a sense of shared reality such that trust in all media both fake and real is undermined,” he adds, agreeing with Cools. However, he sees a clear effect on business from this as well: “This will place a huge burden on businesses if even basic interactions must be treated sceptically and as potentially malicious”.

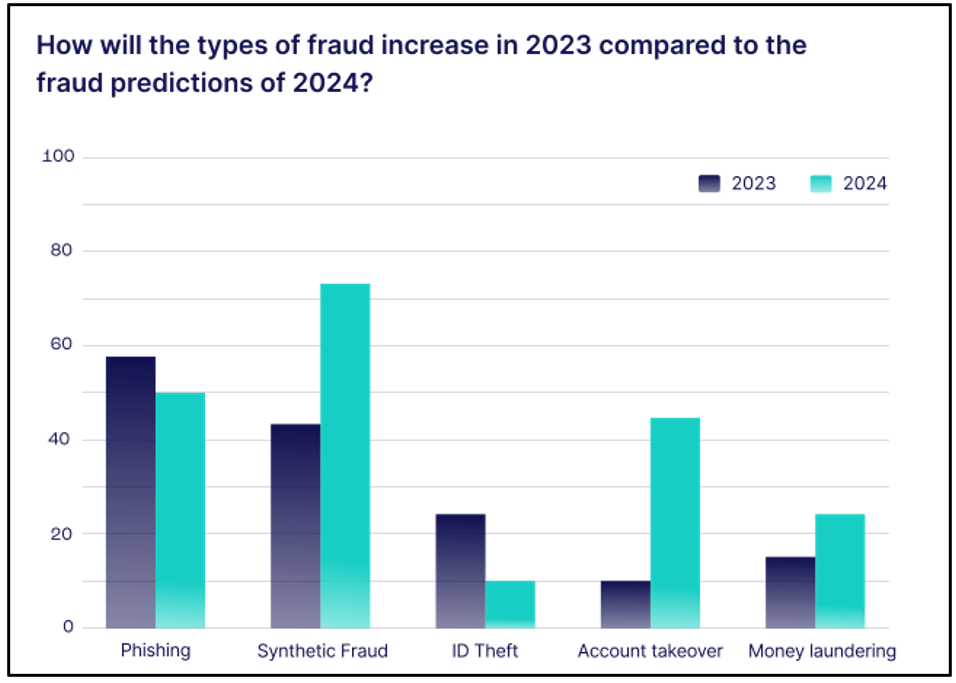

A 2021 study by the University of Portsmouth Centre for Counter Fraud Studies estimated that fraud costs UK companies £137 billion a year. Statistics from SEON show that synthetic fraud (of which deepfakes are a part) is expected to be the fastest-growing fraud type, leading to billions in damages.

Looking towards the future, Lewis says “others have described the current moment as an arms race between synthetic content generation and detection technologies. In that sense, the generators are currently winning, as the potential gains to malicious actors generating synthetic content are greater than the current resources devoted to detection capabilities.”
